The Amazon Outage Is a Warning. Is Your AI Agent Flying Blind?

Mar 16, 2026

On March 5, 2026, Amazon’s website and shopping app went down. Customers couldn’t check out, prices disappeared, and account pages failed to load. For hours, the world’s most visited storefront was effectively offline.

The cost was immense: an estimated 99% fall in North American marketplace activity, or 6.3 million lost orders. While Amazon attributed the disruption to a “software code deployment,” internal reports identified a more systemic culprit: AI-assisted changes implemented without established safeguards. Amazon is not the first and it will not be the last.

Across organizations, we have all felt the push to adopt new AI OKRs and increase business efficiency. In software engineering, this has produced incredible throughput increases, with McKinsey reporting productivity increases of 20-45% for teams that adopted AI coding tools early.

However, this revolution in how we write code has incurred a massive stability debt. Google’s 2025 DORA report noted a concerning 10% increase in software instability reported alongside AI adoption. This article explores how runtime-aware development, a strategy that grounds AI agents in execution-level reality, can prevent these high-impact incidents from occurring.

The Governance Gap (Velocity vs. Incidents)

The greatest predictor of an outage is change, with production incidents frequently traced back to a specific code modification. The greater the rate of change, the higher the risk that an error will occur; if governance does not increase at the same rate as velocity, this risk increases unchecked.

In the era of human engineering, we balanced the Velocity of change with Governance (peer review, manual testing, and staging). But AI acceleration has fundamentally altered this risk equation.

We can now model the risk of any automated action using the following formula: Velocity multiplied by Blast Radius, divided by the effectiveness of your Governance.

Backed by AI coding agents like Cursor, GitHub Copilot, and others, we have optimized for velocity, merging PRs faster than ever before. But this velocity has been achieved without an ability to fully understand the potential blast radius and without sufficient measures to boost the effectiveness of our governance. As a result, the risk potential is increasing.
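To make the formula concrete, here is a minimal sketch in Python. The function name `risk_score`, the units, and the sample numbers are all assumptions chosen for illustration; the article does not prescribe how to measure velocity, blast radius, or governance effectiveness.

```python
def risk_score(velocity: float, blast_radius: float, governance: float) -> float:
    """Risk = Velocity x Blast Radius / Governance effectiveness.

    All three inputs are hypothetical, unitless scores for illustration:
    velocity      -- e.g. changes merged per week
    blast_radius  -- e.g. number of services a typical change can affect
    governance    -- effectiveness of review, testing, and staging
    """
    if governance <= 0:
        raise ValueError("governance effectiveness must be positive")
    return velocity * blast_radius / governance


# Baseline: human-paced change with matching governance.
baseline = risk_score(velocity=10, blast_radius=5, governance=2)      # 25.0

# AI tooling triples velocity while governance stays flat.
accelerated = risk_score(velocity=30, blast_radius=5, governance=2)   # 75.0

# Governance scaled at the same rate as velocity restores the baseline.
matched = risk_score(velocity=30, blast_radius=5, governance=6)       # 25.0

print(baseline, accelerated, matched)
```

With these illustrative numbers, tripling velocity triples the risk unless governance effectiveness scales by the same factor.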

This leads to the Productivity Paradox.

The Productivity Paradox: Why Senior Sign-Offs Fail

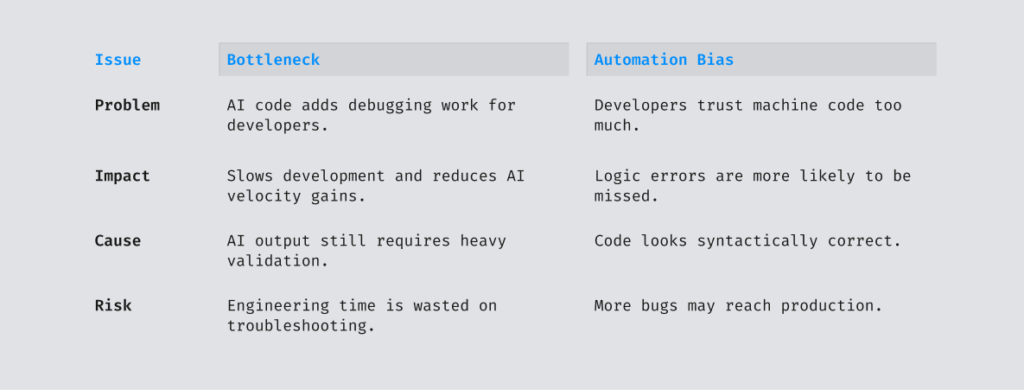

The common response to such outages is to mandate senior engineer oversight for all AI-assisted changes. While this is a prudent immediate safeguard, it creates a massive bottleneck without a clear connection to improved outcomes:

- The Bottleneck: It adds significant toil to your most expensive talent, completely negating the velocity gains AI was supposed to provide.

- Automation Bias: Because the output looks syntactically perfect, humans are statistically less likely to catch logic errors in machine-generated code, which can contain significantly more logic flaws than human-written code.

We cannot solve a machine-speed problem with a manual-speed process. This “velocity-to-incident” chain catches companies like Amazon and is the primary threat to any organization scaling AI automation today.

The Shift to Non-Deterministic Failure

While governance is about how fast we move, the second risk is the nature of the code itself. Traditional software development was deterministic. A human developer had a clear intent and wrote specific lines of code they knew would generate a required output.

Faced with the same challenge twice, a human engineer will produce more or less the same logic each time.

AI agents work on an entirely different methodology. They are probabilistic. They do not follow a static rulebook; instead, they calculate the highest probability path to reach a goal. An agent can write a slightly different solution to the same challenge every single time it is asked.

This introduces a category of “unknown-unknowns” into our running systems. While AI agents accelerate code generation, they lack the human developer’s inherent understanding of how that code will fit into the live environment. Software is becoming easier to write, but harder to understand once it runs.

The Risks Realized: Amazon Postmortem

On March 10, 2026, Amazon reportedly convened an engineering “deep dive” to address a trend of incidents with a “high blast radius.”

- March 2, 2026: The system displayed incorrect delivery times to customers at checkout, leading to 120,000 abandoned orders and 1.6 million website errors.

- March 5, 2026: Transactions could not complete, prices were not displayed, and items were missing from marketplaces. This was reportedly triggered by an engineer following inaccurate advice inferred by an AI agent from an outdated internal wiki.

In both incidents, the bug was discovered in production. It could have been identified hours earlier, but the AI agents were making decisions that were hypothetically optimal in a vacuum, yet operationally disastrous in the real world. To understand why, we have to look at the awareness levels that we have given to AI coding agents.

The Three Levels of AI Awareness

To understand the context gap that leads to these failures, we have to look at how an AI agent views your system.

There are three distinct levels of awareness required for safe development:

- Local Context: Visibility of the immediate file. This is great for syntax and logic but blind to the rest of the architecture.

- Global Context: Awareness of the entire repository. This enables architectural consistency but remains static: it reflects what the code is, not how it behaves.

- Runtime Context: The ground truth of the live, running application. This provides the variables, call stacks, and real traffic patterns necessary to move from probabilistic guessing to deterministic validation.

Without Level Three, AI agents are forced to navigate by an idealized map that rarely matches the actual road.
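The difference between static and runtime context can be illustrated with a small Python sketch. It contrasts what can be read from the source (a function signature) with what is only visible while the code runs (actual argument values and the calling chain). The `capture_runtime` decorator and the `delivery_days` and `checkout` functions are hypothetical names invented for this example; they are not part of any product API.

```python
import functools
import inspect
import traceback


def capture_runtime(snapshots):
    """Hypothetical decorator: record argument values and callers per call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            bound = inspect.signature(func).bind(*args, **kwargs)
            bound.apply_defaults()
            snapshots.append({
                "function": func.__name__,
                "arguments": dict(bound.arguments),
                # Drop the last frame, which is this wrapper itself.
                "callers": [f.name for f in traceback.extract_stack()[:-1]],
            })
            return func(*args, **kwargs)
        return wrapper
    return decorator


snapshots = []


@capture_runtime(snapshots)
def delivery_days(distance_km, express=False):
    return 1 if express else max(2, distance_km // 300)


def checkout(order):
    return delivery_days(order["distance_km"], order.get("express", False))


# Static view: what the code is (available from the file or repository).
static_view = str(inspect.signature(delivery_days))

# Runtime view: how the code behaves (only available from a running system).
checkout({"distance_km": 900})
runtime_view = snapshots[-1]

print(static_view)
print(runtime_view["arguments"], runtime_view["callers"][-1])
```

The static view says only that the function accepts a distance and an optional flag. The runtime view shows which values actually arrived and which caller supplied them, which is the kind of information Level Three adds.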

In both incidents the bug was discovered in production, but it could have been identified hours earlier, before the incident occurred, if the AI agent had had access to runtime insights during the authoring phase.

Without it, agents are “flying blind,” making decisions that are hypothetically optimal in a vacuum but operationally disastrous in the real world.

The Evolution: Runtime-Aware Development

To safely harness AI speed, we have to adopt runtime-aware development. This means connecting the AI coding agent’s reasoning loop directly to the runtime across all environments, from QA and staging to pre-production, and to our AI SRE (site reliability engineering) tools, so that we can confirm that changes do not negatively impact downstream dependencies and third-party integrations.

1. Mitigating Probabilistic Risk

By giving AI agents visibility into the runtime, we let them preview and simulate the runtime impact of a change before it reaches scale. It allows the AI agent to ask:

“If I apply this logic to the current live traffic pattern, what happens to the call stack?” This “ground truth” feedback loop prevents hazardous hallucinations before they ever leave the authoring stage.
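One way to picture this feedback loop is a replay check: run inputs captured from a running system through both the current implementation and the agent’s candidate, and report every divergence. The sketch below is a simplified illustration under stated assumptions. The `replay` helper, the two ETA functions, and the hard-coded sample orders are hypothetical stand-ins for real captured traffic, and this is not how any particular tool implements simulation.

```python
def replay(recorded_inputs, current_impl, candidate_impl):
    """Return (sample, expected, actual) for every input where they diverge."""
    regressions = []
    for sample in recorded_inputs:
        expected = current_impl(sample)
        try:
            actual = candidate_impl(sample)
        except Exception as exc:  # a crash on real data counts as a regression
            regressions.append((sample, expected, repr(exc)))
            continue
        if actual != expected:
            regressions.append((sample, expected, actual))
    return regressions


def current_eta(order):
    # Existing behavior: tolerate orders with missing fields.
    return order.get("transit_days", 5) + order.get("handling_days", 1)


def candidate_eta(order):
    # Hypothetical agent rewrite: cleaner, but assumes both fields exist.
    return order["transit_days"] + order["handling_days"]


# Hypothetical samples standing in for traffic captured from a live system.
recorded_orders = [
    {"transit_days": 2, "handling_days": 1},
    {"transit_days": 4},   # handling_days missing in real traffic
    {"handling_days": 2},  # transit_days missing in real traffic
]

regressions = replay(recorded_orders, current_eta, candidate_eta)
for sample, expected, actual in regressions:
    print(f"input={sample} expected={expected} got={actual}")
```

The candidate looks correct in isolation and passes on the well-formed order. Only the samples shaped like real traffic expose that it fails when a field is absent.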

2. Scaling Proportional Governance

By moving to a runtime-aware model, validation shifts from a reactive activity to a proactive part of authoring. This provides the “machine-speed” governance required to match AI velocity. It ensures code validation is based on how the system actually works, not just how the “map” of the source code looks.
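Proportional governance can be sketched as a policy that scales the required checks with the reach of a change, rather than sending every AI-assisted change to a senior reviewer. The `Change` fields, the check names, and the thresholds below are assumptions made up for illustration; a real policy would be tuned to the organization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    files_touched: int
    downstream_services: int  # services that depend on the changed code
    ai_assisted: bool


def required_checks(change: Change) -> list[str]:
    """Pick checks in proportion to blast radius (hypothetical thresholds)."""
    checks = ["unit_tests"]
    if change.downstream_services > 0:
        # Automated, machine-speed validation against runtime behavior.
        checks.append("runtime_replay")
    if change.ai_assisted and change.downstream_services >= 3:
        # Reserve scarce human review for the widest-reaching changes.
        checks.append("senior_review")
    return checks


small = Change(files_touched=1, downstream_services=0, ai_assisted=True)
wide = Change(files_touched=4, downstream_services=5, ai_assisted=True)

print(required_checks(small))
print(required_checks(wide))
```

Under this sketch, most changes are gated by automated checks, and manual sign-off is limited to changes whose blast radius justifies the cost.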

The Solution: Lightrun MCP and Production-Grade Engineering

Lightrun connects AI assistants directly to live software environments, acting as the interface between the AI brain and the live runtime.

Lightrun MCP enables AI-accelerated, runtime-aware development by:

- Simulating runtime impact: Enabling AI agents to preview and simulate exactly how a code change will behave using a read-only sandboxed running environment.

- Validating non-deterministic logic: Verifying AI-suggested changes and code optimizations against real-world data patterns before they reach scale.

- Mitigating outages early: Identifying “Sev2” incidents and logic errors at the authoring stage rather than during an active incident response.

- Empowering Agents with runtime context: Through our MCP, Lightrun provides AI agents with the real-time visibility to understand environmental variables, preventing destructive hallucinations that plague context-blind agents.

In the AI era, the critical capability isn’t just generating code faster. It’s seeing, preventing, and fixing what happens at runtime when that code meets reality.

Stop flying blind. Equip your AI agents with live runtime context today.